Refining reporting guidelines using behaviour change theory

The lasting legacy of most medical research is the written account. When writing up their work, researchers often omit information that readers (including clinicians, reviewers, patients, and other researchers) need to fully understand, appraise, replicate, or apply the research. Reporting guidelines try to solve this problem. They are community-created recommendations about what information to include when writing up research so that others can use it. The first reporting guideline was created almost 30 years ago, and many more have been created since. Most leading medical journals ask authors to adhere to reporting guidelines, yet adherence remains low in almost all medical fields. When authors do not adhere to reporting guidelines, their work is less transparent and less valuable, contributes less to patient outcomes, and has the potential to cause inadvertent harm by distorting the evidence base. The aim of my thesis is to understand why authors do not adhere to reporting guidelines when writing up medical research, and to address these reasons with the intention of increasing adherence in the future.

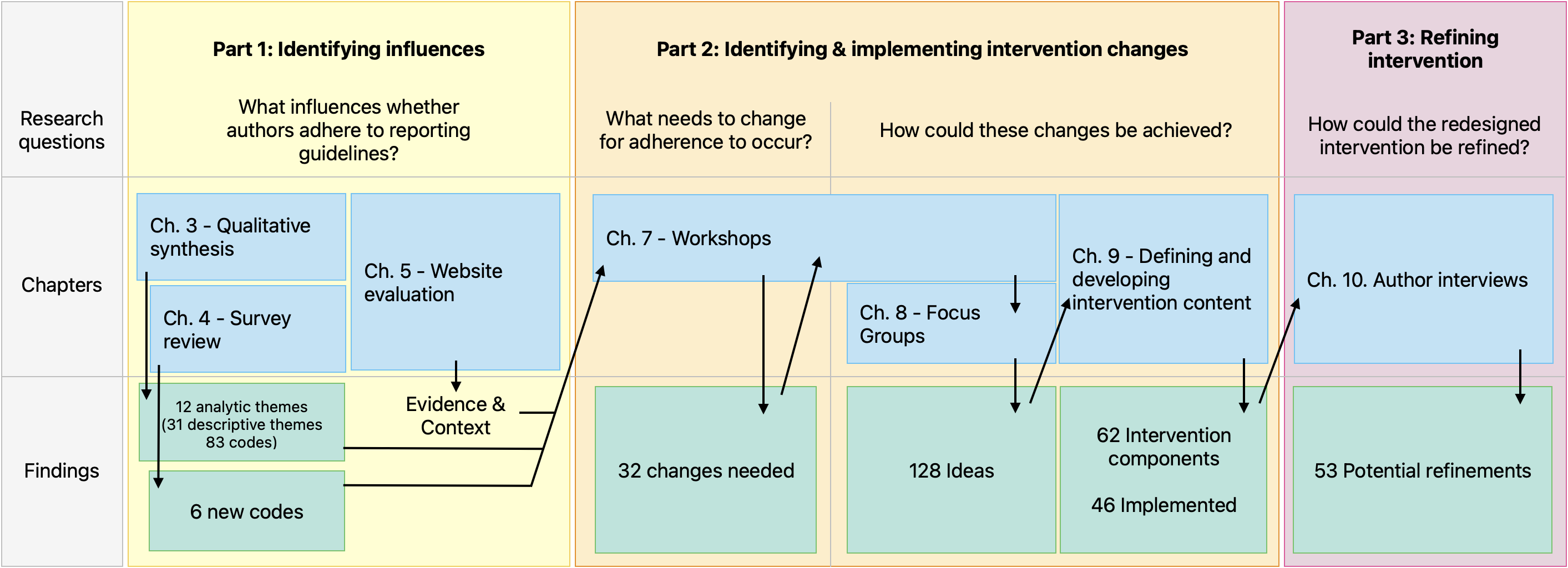

I began my thesis with this clear aim but without a route to get there. My first step was a qualitative synthesis (chapter 3) of research exploring authors’ experiences of reporting guidelines. I discovered that authors’ adherence to reporting guidelines is influenced not only by features of the guidelines themselves, but also by the system that surrounds them, including the websites that disseminate them and the people and policies of the broader scholarly ecosystem. I probed this system further with a review of quantitative surveys and an evaluation of a key dissemination website (chapters 4 and 5).

Given the complexity of the system and of the behaviour in question, I realised I would need a framework to help me make sense of them. I chose the Behaviour Change Wheel (chapter 6), a well-established, evidence-based framework for designing and defining complex behaviour change interventions. I led workshops and focus groups (chapters 7 and 8) to guide stakeholders through the framework’s steps and ultimately identified 32 influences that needed to change, and 128 ideas for how to change them. These ideas were applicable to guideline developers, the EQUATOR Network, journals, publishers, funders, ethics committees, and institutions.

I decided that redesigning reporting guidelines and EQUATOR’s website would be an effective, affordable, acceptable, and equitable way to realise many of the generated ideas. I defined 63 intervention components and incorporated 46 of them into a prototype website that included a redesigned reporting guideline (the Standards for Reporting Qualitative Research) and a redesigned EQUATOR Network home page (chapter 9). I then explored authors’ experiences of using this prototype through in-depth interviews, observation, think-aloud, and writing appraisals (chapter 10), and I identified 53 possible areas for improvement.

My key outputs include a design blueprint for user-friendly reporting guideline resources, and an online platform for creating and hosting resources following this blueprint. This platform will benefit guideline developers, who typically lack the money, time, and skills to create user-friendly resources. I have secured funding for a feasibility study and parallel process evaluation to explore the acceptability and usability of this platform amongst authors submitting to journals, in preparation for a future evaluation to determine whether my redesign leads to more authors adhering to reporting guidelines successfully.

In this chapter I introduce the evidence gap I have addressed and the approach I have taken to address it. I begin by describing the prevalence and consequences of poorly reported medical research. I introduce reporting guidelines and position them as part of a complex behaviour change intervention. This intervention has had a disappointing impact: few authors adhere to reporting guidelines and medical research is still poorly reported. This brings me to my evidence gap: how can we get more authors to adhere to reporting guidelines? I then outline my aims, objectives, and thesis structure.

0.1 The problem of poor reporting in health research

Facing an uncertain choice during treatment for multiple myeloma, epidemiologist Alessandro Liberati wrote “Why was I forced to make my decision knowing that information was somewhere but not available?” [1]. When I started my DPhil, governments may have been asking the same question. The world was in the grip of COVID-19 and decision makers were wading through a deluge of patchy research articles missing important information [2]. In the years since, members of my family have had to make treatment decisions where the evidence is of “low certainty” because key details are missing from research articles.

A selfish silver lining of these tumultuous years was that my family and friends finally understood the problem my thesis addresses: when medical researchers inadequately describe what they did, why they did it, and what they found, other people cannot understand, replicate, or use their work. Research costs huge amounts of time, money, and effort, and the written account is typically its sole legacy. When details are omitted they are lost. The remaining gaps are sources of doubt: are they accidental omissions? Oversights? Cover-ups? Whatever their source, the gaps fragment the full picture, and the potential value to patients drains away.

Early concern over reporting quality came from frustrated reviewers unable to find the data they needed within research reports. For example, in 1963, Glick [3] found that many reports of psychiatric therapies used ambiguous descriptions of treatment duration like “at least two months” or “from one to several months”. These descriptions were so vague they were “unsuitable for comparative purposes”. In the decades since, evidence syntheses have increasingly underpinned clinical guidance; the Cochrane Collaboration and the National Institute for Health and Care Excellence (NICE) are two organisations that conduct such syntheses. Their reviews influence clinical practice throughout the UK and globally, but they are undermined when the underlying literature omits details. Dechartres et al. [4] looked at all Cochrane reviews published between 2011 and 2014. These reviews included over 20,000 randomised controlled trials. A third of these trials were so poorly reported that reviewers could not judge their risk of bias, limiting confidence in the reviews’ conclusions. Some researchers fear that an unclear risk of bias may actually be a high-but-hidden one. For example, Savović et al. [5] found exaggerated intervention effect estimates in randomised trials judged to be at high or unclear (versus low) risk of bias. Poor reporting, therefore, not only undermines confidence in systematic reviews but potentially allows distortions to creep into the evidence unchecked.

No similar data exist for NICE reviews, so instead I’ll draw on personal experience of consulting NICE evidence when a relative was deciding between two cancer procedures. Both options had serious side effects, similar success rates, and neither had a clear-cut advantage given my relative’s medical history. To respect my relative’s privacy I won’t cite the NICE review, but it included ten studies: seven case series, two non-randomised comparison studies, and one randomised trial. Two studies did not describe participants’ gender, one did not describe their ages, four did not describe their exclusion criteria, and three did not fully describe side effects. Consequently, NICE’s recommendation was less certain than it could have been. Did the case series include patients similar to my relative? We couldn’t tell. Did participants of equivalent age experience the side effects we feared? No idea. My family echoed Liberati’s frustration: the missing information was probably collected at some point, and we (as taxpayers) may even have paid for some of that research, but because details were not written down they could not help us in our hour of need. With typical scientific detachment, Dechartres wrote [4] that “waste related to poor reporting could be completely avoided”, but it’s hard to remain so dispassionate when tossing and turning in the small hours of the morning, deliberating over a life-changing decision, when details matter more than ever.

Two of the studies in that NICE review barely described the procedure in question, so had the surgeon wished to repeat it for my relative, he wouldn’t have been able to. This reveals another consequence of poor reporting: when research is poorly described, it cannot be repeated. Doctors and service providers need clear descriptions to replicate interventions [6]. As Feinstein noted in 1974 [7], it is difficult enough for a clinician to understand the value of an unfamiliar procedure, but “it is much more difficult when he is not told what that procedure was”. For example, Davidson et al. [8] reviewed trials describing exercise interventions for chronic back pain and found that authors often did not describe interventions sufficiently for other healthcare providers to copy them. Researchers also need clear descriptions to understand, appraise, and repeat each other’s work. For example, Carp [9] found that a third of 241 brain imaging studies omitted information necessary to interpret and repeat them, such as the number of examinations, examination duration, and the resolution of images.

As well as being wasteful, poor reporting breeds mistrust. The COVID-19 pandemic saw a deluge of research, yet of 251 trial reports [10], only 14% described harms adequately; fifty-five trials (22%) did not report any information about harms, and most others (n=150, 60%) did not explain whether harms resulted in participants dropping out. Fear of possible side effects stopped many people from getting vaccines [11], seeking medical treatment [12], or wearing masks [13]. By not reporting side effects, trial reports did nothing to quell those fears and, in some cases, may have stoked them further. Even years later, in April 2024, a British Member of Parliament describing himself as “double vaccinated and vaccine-harmed” [14] told the House of Commons that the “adverse effects of the [Covid-19] vaccine…are not currently known”, before speaking of “gaslighting” and blaming the vaccine for causing “enormous harm”. He repeatedly railed against the omission of information about harms (“crucial information was hidden from the regulator and the public”, “The public are being denied that data… yet again, data is hidden with impunity”) before jumping to unevidenced, exaggerated, or conspiratorial claims of harm and cover-ups. The absence of evidence seemingly left a void for fantasy and fear to fill.

These are a mere handful of the many studies documenting poor reporting in the medical literature. A 2023 systematic review found 148 such studies published between 2020 and 2022 alone [15]. All investigated reporting quality in different medical research disciplines, and almost all concluded that reporting was sub-optimal. Hence, poor reporting is a long-standing problem that pervades medical disciplines; it devalues research, seeds mistrust in science, introduces avoidable doubt and patient distress, and derails the uptake of new knowledge into clinical practice.

0.2 Reporting guidelines to the rescue?

Concern over reporting quality crescendoed through the eighties and early nineties as systematic reviews became more common. Responding to calls for “strategies”, “guides”, and “lists” to help authors prepare their manuscripts, two independent groups of methodologists, trialists, and editors published the Standards Of Reporting Trials (SORT) [16] and Asilomar [17] guidelines in 1994. Both advised on how to report randomised trials and had similar content. On the suggestion of JAMA’s deputy editor, the SORT and Asilomar groups met in 1996 and combined their recommendations to create the CONsolidated Standards of Reporting Trials (CONSORT) statement [18]. CONSORT is a set of recommendations detailing what information authors should include in reports of randomised trials. It comprised an article describing how it was developed, a checklist, a flow diagram, and (after an update in 2001) an ‘Explanation and Elaboration’ publication [19–21].

CONSORT proved influential, and other groups quickly developed guidelines for different research types. Reporting guidelines resemble a theme and variations: CONSORT forged a path that others have followed with varying fidelity (see Table 5.5). Most are named with acronyms. Most were first published as a journal article describing their development. Some, but not all, have checklists and elaboration documents. Some guideline developers publish resources as separate documents; others put them all into a single journal article. Guidelines are developed by different groups with different compositions (possibly including methodologists, editors, and clinicians) and in different ways (e.g., some by Delphi consensus [22]). Although most follow CONSORT’s approach of presenting recommendations that focus on reporting rather than conduct, guidelines differ in how forceful their recommendations are and whether they also seek to influence study design.

| Guideline | Full name | Study type | Year published | Resources |
|---|---|---|---|---|
| CONSORT | CONsolidated Standards Of Reporting Trials | Randomised controlled trials | 1996 [19]; updated 2001 [20] and 2010 [23] | Development article; checklist; explanatory document; flow diagram; website; COBWEB writing tool [24] |
| PRISMA | Preferred Reporting Items for Systematic reviews and Meta-Analyses | Systematic reviews and meta-analyses | 2009 [25]; updated 2021 [26] | Development article; checklist; explanatory document; flow diagram; website |
| PRISMA-P | Preferred Reporting Items for Systematic review and Meta-Analysis Protocols | Protocols of systematic reviews and meta-analyses | 2015 [27] | Development article; checklist; explanatory document; website |
| ARRIVE | Animal Research: Reporting of In Vivo Experiments | Publications describing research involving live animals | 2010 [28]; updated 2020 [29] | Development article; checklist; explanatory document; website; action plans; compliance questionnaire |
| SRQR | Standards for Reporting Qualitative Research | Qualitative health research | 2014 [30] | Development article; checklist (not editable); explanatory document (as supplementary material) |
| STROBE | Strengthening the Reporting of Observational Studies in Epidemiology | Observational studies in epidemiology | 2007 [31] | Development article; checklists (four versions: cohort, case-control, cross-sectional, and combined); explanatory document; website |
| SPIRIT | Standard Protocol Items: Recommendations for Interventional Trials | Protocols of clinical trials | 2013 [32] | Development article; checklist; explanatory document; website |
| CARE | CAse REport | Case reports and data from the point of care | 2013 [33] | Development article; checklist; explanatory document; website; training course [34]; writing application [35] |
| STARD | STAndards for Reporting Diagnostic accuracy | Studies of diagnostic accuracy | 2003 [36]; revised 2015 [37] | Development article; checklist; explanatory document |
| AGREE | Appraisal of Guidelines, Research and Evaluation | Clinical practice guidelines | 2016 [38] | Development article; checklist; website |
| SQUIRE | Standards for QUality Improvement Reporting Excellence | Quality improvement in health care | 2008 [39]; revised 2016 [40] | Development article; checklist; explanatory document; website; glossary |
| CHEERS | Consolidated Health Economic Evaluation Reporting Standards | Economic evaluations | 2013 [41]; revised 2022 [42] | Development article; checklist; explanatory document |
| TRIPOD | Transparent Reporting of a multIvariable prediction model for Individual Prognosis Or Diagnosis | Studies developing, validating, or updating a prediction model, whether for diagnostic or prognostic purposes | 2015 [43] | Development article; checklist; explanatory document; website; adherence assessment form |
| RIGHT | Reporting Items for practice Guidelines in HealThcare | Practice guidelines in healthcare | 2017 [44] | Development article; checklist (not editable); explanatory document (as supplementary material); website |
| COREQ | COnsolidated criteria for REporting Qualitative research | Interviews and focus groups | 2007 [45] | Development article; checklist (not editable) |

0.3 The EQUATOR Network unites the reporting guideline movement

As reporting guidelines grew in number and the problem of poor reporting gained recognition, the late Professor Douglas Altman, a leading medical statistician and evidence synthesiser, saw the need to catalogue reporting guidelines and form a community. He united academics from around the world to form the EQUATOR Network (Enhancing the QUAlity and Transparency Of health Research), often simply called EQUATOR. It was the first coordinated attempt to combat poor reporting systematically and on a global scale. One of EQUATOR’s core objectives was to create a database of reporting guidelines, accessible via its website, where researchers can also find training and information about developing guidelines.

There are now over 500 reporting guidelines, representing the collective work of thousands of academics. The best-known guidelines are endorsed by large numbers of medical journals and the International Committee of Medical Journal Editors, and are amongst the 1% most highly cited publications indexed by Web of Science [46].

0.4 Reporting guidelines are part of a complex behaviour change intervention

The publishing community began to take note. The International Committee of Medical Journal Editors encouraged journals “to ask authors to follow [reporting] guidelines” [47]. Concerned editors sought ways to adopt reporting guidelines, and more and more journals [48,49] have since introduced reporting guidelines into their policies through a range of strategies, outlined in Table 2. Policies vary in their degree of enforcement (from passive recommendation through to compulsory enforcement) and in which guidelines they cover: PRISMA and CONSORT are more commonly enforced than other reporting guidelines, and many reporting guidelines in EQUATOR’s library are neither enforced nor endorsed by any journal. Instead of listing reporting guidelines by name, many journals keep their instructions vague and merely recommend that authors find an appropriate guideline on EQUATOR’s website. To my knowledge, no journal explicitly advises against using a reporting guideline.

| Policy | Example |
|---|---|
| Enforcing adherence | An editor or peer reviewer checks the article body for reporting guideline adherence and asks the author to add missing items. |
| Requesting that peer reviewers use reporting guidelines | Editors ask peer reviewers to consider reporting guideline adherence as part of their review. Some editors may supply the reviewer with the relevant checklist. |
| Enforcing checklist submission | Editorial staff require authors to submit a completed reporting checklist as part of manuscript submission. Some journals may refuse to process a submission when the checklist is missing. Some journal submission systems may include fields for authors to upload their checklists, whereas other journals may expect authors to upload checklists as a supplementary file. |
| Journal endorsement | The journal’s instructions to authors recommend that authors follow reporting guidelines. Guidelines may be specified, in which case journals may link to guideline-specific websites, to the guideline publications, or to the EQUATOR Network website. Sometimes journals include a general statement but do not name guidelines, instead referring authors to the EQUATOR website with an instruction to follow “relevant guidance”. |
| Publisher endorsement | Sometimes reporting guideline policies are set at the level of the publisher, as is commonly done for editorial policies. Individual journals may point authors to their publisher policies. |
| No policy | Journals have no policies regarding reporting guidelines. |

Other stakeholders have begun incorporating reporting guidelines into their policies. Conferences like the Peer Review Congress ask authors to use reporting guidelines when writing their abstracts. medRxiv, a large preprint server, asks authors to declare they have “followed all appropriate research reporting guidelines, such as any relevant EQUATOR Network research reporting checklist(s)” [50].

EQUATOR have developed training programmes based on reporting guidelines. The training covers different ways to use reporting guidelines, including drafting manuscripts, checking manuscripts you have written, and appraising the reporting of someone else’s manuscript. Researchers have developed writing software to help authors apply reporting guidelines when drafting [51,52], online applications to facilitate checklist and flow diagram completion [53,54], and resources for reviewers to check compliance [55].

Hence, over the years, a system has grown organically around reporting guidelines, driven by separate groups of people doing what they felt was sensible. This system includes the guidance resources themselves (the publications, checklists, and flow diagrams), the websites that host those resources (guideline websites, the EQUATOR website, and publishers’ websites), organisations that promote or enforce their use (EQUATOR, publishers, the ICMJE, etc.), and the people working with those organisations (researchers, editors, reviewers, etc.). These components all have the same aim: to influence what information researchers include in their articles.

As the Medical Research Council notes, a system with multiple components (like those listed above) shows one of many hallmarks of a complex intervention: “an intervention might be considered complex because of properties of the intervention itself, such as the number of components involved; the range of behaviours targeted; expertise and skills required by those delivering and receiving the intervention; the number of groups, settings, or levels targeted; or the permitted level of flexibility of the intervention or its components.” The reporting guideline system exhibits these sources of complexity, as described in Table 3.

| Source of complexity | Example |
|---|---|
| Number of components involved | Reporting guidelines often consist of guidance documents, checklists, flow diagrams, and other tools. These are disseminated through websites, publishing platforms, and submission systems, and they are endorsed and enforced by stakeholders including publishers, the EQUATOR Network, conference organisers, and preprint platforms. |
| Range of behaviours targeted | Guidelines comprise “reporting items”. Some items are relatively simple, like asking the author to specify their study design in the title. Others are harder, perhaps because they require time, expertise, or prerequisite tasks. For instance, some items may require authors to have conducted their study or analysis in a certain way, or to have collected particular information. |
| Expertise and skills required by those delivering and receiving the intervention | Academics from a particular field write reporting guidelines for their peers (as opposed to a lay audience), so authors, editors, and reviewers must have sufficient expertise to use them. |
| The number of groups, settings, or levels targeted | Groups: users of reporting guidelines differ in their field of expertise, their experience, and their place of work. Settings: although guidelines are mostly written with authoring in mind, many guideline developers also hope their resources will be used by editors or peer reviewers for checking or appraising research articles. Levels: guidelines are written with individuals in mind, but their efficacy is generally measured at group level (e.g., articles from a particular field published in a given period). |
| Flexibility of the intervention or its components | There is variation in guideline content, resources, and the implementation strategies that development groups, publishers, and other stakeholders employ. |

Viewing reporting guidelines as part of a complex behaviour change intervention may seem novel. Researchers often call reporting guidelines “tools” or “strategies” (e.g., [56,57]). In their history of the EQUATOR Network [58], co-founders Douglas Altman and Iveta Simera refer to reporting guidelines as “resources” that “influence” reporting, but do not call them interventions. However, I believe my perspective is not radical. I will now outline studies exploring the efficacy of reporting guidelines and argue that they, too, take a systems perspective, although they seldom acknowledge it explicitly.

0.6 Evidence gap: What more could be done to improve guideline adherence?

Some of the articles I have cited end with rallying cries like “major improvements need active enforcement” [71]. It would be tempting to read Pandis’ results as evidence that heavy editorial enforcement is the best option, but this approach may not generalise to other journals and other guidelines. The dentistry journal in this study was small: only 23 manuscripts underwent this treatment over 2 years, and despite each receiving “30 to 60 minutes” of editorial attention, not all manuscripts fully adhered to CONSORT. The study authors admit the benefits should be “considered in the light of the additional time requirement and need for greater editorial input during the peer review process”.

Other articles have called for lighter forms of enforcement. “We need to promote more active implementation, such as submission of the checklist with the manuscript”, wrote Dechartres [4], and “it is not sufficient for journals to simply recommend the use of STREGA to authors in the authors’ instructions; instead, journals should require submission of the STREGA checklist together with the manuscript”, wrote Nedovic [61]. But the PLOS One [66] and BMJ Open [54] studies found little effect of checklist completion on reporting quality. Additionally, the PLOS One study found that enforcing checklists, although less burdensome than the editorial enforcement described by Pandis, still came with costs to both editors and authors and significantly prolonged publication times. Peer review focussing on reporting might help [68], but it is relatively unexplored as an option and may be unacceptable to reviewers.

These studies all focussed on different methods of enforcement. Some include incidental findings hinting at areas for improvement that enforcement alone cannot fix. For example, because reviewers assessing adherence in the PLOS One study [66] did not always agree or fully understand the guidance, the study authors suggested refining the guideline’s “content” and “perceived clarity”. In the WebCONSORT study [24], Hopewell et al. speculated about why their intervention failed. They had to exclude 39% of manuscripts because editors had incorrectly identified them as randomised trials, and a quarter of authors selected inappropriate extensions. As a solution, they suggested a tool to help authors and editors identify study types. The study authors also raised other hypotheses to explain their intervention’s failure: perhaps the custom combined checklists were too long or unclear, or perhaps feedback given during manuscript revision came too late.

Neither the PLOS One study [66] nor the WebCONSORT study [24] explored these incidental findings. Both might have benefited from a qualitative component to understand why the interventions were not working. In our BMJ Open study [54], we surveyed authors after they completed checklists. Many reported finding the checklist too long, confusing, or irrelevant. However, because we used a multiple-choice question with a (small) box for free-text answers, and because we did not survey authors who did not complete a checklist, our results were fairly superficial.

Together, these incidental findings suggest that authors may face challenges in using reporting guidelines that enforcement alone cannot solve, and some groups have tried to address these challenges. Noting the outcome assessors’ confusion in the PLOS One study, ARRIVE’s developers took steps to improve its clarity when they revised it [72]. They also decided to prioritise items to make the guidance quicker to apply. Hopewell et al. [24] created WebCONSORT because they worried, without providing evidence, that combining CONSORT with its extensions may be “cumbersome and difficult”. In 2018, I worked with the UK EQUATOR Centre as a freelance developer to create GoodReports.org, a website where authors could answer a questionnaire to find the right guideline and then complete the checklist online (previously, some reporting guidelines came with non-editable PDF checklists).

These innovation efforts shared limitations. Firstly, none took steps to identify barriers thoroughly, and by focussing on a few barriers they may have neglected others or introduced new ones. For example, in trying to make combining checklists easier, WebCONSORT may inadvertently have made checklists longer and increased the risk of authors selecting inappropriate guidance. Secondly, these studies did not systematically consider options to address those barriers. For example, ARRIVE’s development team decided to prioritise items as a way to make the guidance quicker to apply, but this is not the only solution; they could also have considered making guidance more concise, providing suggested wording, or creating tools to speed up writing. Thirdly, although the studies describe their innovations, they do not always describe changes beyond the tool in question (e.g., changes to editorial practice) or how those changes are expected to alter behaviour.

0.7 Summary

In summary, medical research is often poorly reported, and this makes it difficult for other researchers to understand, appraise, synthesise, or replicate studies. This, in turn, makes research less useful to patients and potentially harmful if it distorts the evidence base.

I’ve introduced reporting guidelines as recommendations, created by the research community with the aim of improving reporting quality. I’ve described how a system of tools, websites, people, and policies has grown organically around reporting guidelines, and I have argued this system forms a complex behaviour change intervention with the goal of altering what authors write.

I’ve discussed how this system has had only a modest effect on reporting quality, at best. I’ve described how studies exploring modifications to this system are limited because they did not thoroughly explore barriers or facilitators that influence reporting guideline adherence, and lacked a systematic method to identify options to address those influences.

0.8 Aims and Objectives

My aim was to identify and address the influences affecting whether authors adhere to reporting guidelines. I wanted to explore the entire reporting guideline system, and I wanted to be thorough: I wanted to identify as many influences as possible, and as many solutions as possible, before deciding which to implement.

My objectives were:

- To identify influences that may affect reporting guideline impact by synthesising existing research and by evaluating the EQUATOR Network website (addressed in chapters 3 - 5)

- To work with key stakeholders to identify intervention changes to address these influences (addressed in chapters 7 - 8)

- To implement these changes (addressed in chapter 9)

- To refine the new intervention in response to feedback from authors (addressed in chapter 10)

My thesis bears the hallmarks of pragmatism. I used both qualitative and quantitative methods. Constraints (like time and access to participants) influenced my decisions. I balanced participants’ views with my own: I sought to set aside my own views as far as possible in all chapters except for the workshops I conducted with EQUATOR (chapter 7) and the design of the intervention (chapter 9). I balanced inductive and deductive reasoning: my early chapters were exploratory and inductive, and my later chapters became increasingly deductive as my focus narrowed and I relied more heavily on frameworks.

0.9 Thesis structure

I have structured my thesis in three parts. Part 1 (chapters 3 - 5) addresses my first objective, part 2 (chapters 6 - 9) addresses my second and third objectives, and part 3 (chapter 10) addresses my fourth objective. I’ll now give a short summary of each chapter.

Chapter 2 - Reflections on starting my DPhil

I reflect on my background, my prior held opinions, and those of my supervision team, and how these may have influenced the direction of this thesis.

Chapter 3 - What influences researchers when using reporting guidelines? Part 1: A thematic synthesis

The next three chapters pertain to my first objective: to identify possible influences affecting whether authors adhere to reporting guidelines. Chapter 3 describes a thematic synthesis of qualitative studies exploring authors’ experiences of using reporting guidelines.

Chapter 4 - What influences researchers when using reporting guidelines? Part 2: Describing the questions asked in quantitative surveys

This chapter builds on the previous one by identifying additional possible influences from the content of quantitative survey questions.

Chapter 5 - Describing who visits EQUATOR’s website for disseminating reporting guidelines, and how visitors use it

This chapter describes the characteristics and behaviour of visitors to the EQUATOR Network website. This website, although an important piece of reporting guideline infrastructure, was rarely explored by the studies reviewed in the previous two chapters. This evaluation adds evidence and context to the influences identified in those chapters, and goes on to inform discussions and decisions in later chapters.

Chapter 6 - Selecting the Behaviour Change Wheel framework

The next three chapters pertain to my second objective: identifying intervention changes. Chapter 6 introduces the Behaviour Change Wheel, a framework for designing behaviour change interventions. I explain how my thesis took shape at this point in time; my view of reporting guidelines as a system crystallised, and Charlotte Albury joined my supervision team as my plans took an unexpected qualitative turn. I wanted my thesis to accurately reflect the twists of my DPhil, so instead of introducing my chosen framework at the start of my thesis (which may be customary), I introduce it in the middle to more accurately reflect my journey.

Chapter 7 - Following the behaviour change wheel guide: Workshops with reporting guideline experts

This chapter describes how I led workshops with reporting guideline experts from the UK EQUATOR Centre to identify intervention options using the Behaviour Change Wheel framework.

Chapter 8 - Generating ideas to address influences: focus groups with reporting guideline developers, advocates, and publishers

This chapter reports focus groups where I collected ideas on how intervention options could be realised.

Chapter 9 - Defining intervention components and developing a prototype

In this chapter, I bring together the outputs of the previous two chapters to create a table of intervention components. I then describe how I implemented these components by redesigning a reporting guideline (SRQR) and the EQUATOR Network website’s home page, and by creating a web platform for reporting guideline developers to create and disseminate resources.

Chapter 10 - Refining the intervention: interviewing authors to identify deficient intervention components

In this chapter, I address my final objective by refining the redesigned reporting guideline and home page in response to feedback from authors. I describe a qualitative study in which I used observation, think-aloud, structured interviews, and a writing evaluation to gather feedback from an international sample of authors.

Chapter 11 - Discussion

After summarising my findings and outputs, I reflect on the strengths and limitations of my approach, the next steps, the impact I hope my work will have on scholarly publishing and the meta-research community, and its implications for policy.

Figure 1 summarises the outputs of each research chapter and shows how chapters fed into each other.